Data Quality Assurance and Quality Control

Abstract: This deliverable is a Standard Operating Protocol (SOP) that describes the methods for data quality assurance and quality control (QA/QC). It defines terms and sets out guidelines for workflow. It then describes practical processes for quality assurance and a range of tests for quality control, including suggestions for flagging systems and data handling.

Keywords: Quality assurance, Quality control, flagging

Definitions and terms

Flags: A system to identify the quality of data which preserves the original data and indicates the degree of manipulation.

Metadata: Contextual information to describe, understand and use a set of data. [1]

QA & QC: Quality Assurance and Quality Control is a two-stage process aiming to identify and filter data in order to assure their utility and reliability for a given purpose.

QA: Quality Assurance is process-oriented and encompasses a set of processes, procedures or tests covering planning, implementation, documentation and assessment to ensure that the process generating the data meets a set of defined quality objectives.

QC: Quality Control is product-oriented and consists of technical activities to measure the attributes and performance of a variable to assess whether it passes some pre-defined criteria of quality.

Cross reference

All other SOPs provided by AQUACOSM in which data are collected should refer to this SOP in relation to QA procedures.

Materials and Reagents

- Software such as Excel, R, SPSS, SAS, Systat or other statistical programs. QC procedures may also be built into database functionality.

Health and safety regulation

Not relevant.

Environmental indications

Not relevant.

Quality Assurance and Quality Control Workflow

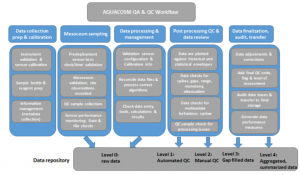

The purpose of quality assurance and quality control is to ensure the reliability and validity of the information content of the data. Many QA & QC measures can be undertaken; however, a ubiquitous and crucial requirement is that each step is documented, described and repeatable. Documentation is the key to making data reliable and valuable [2][3]. The figure above describes the recommended workflow for AQUACOSM data collection.

Based on the quality assurance and control steps the raw data have undergone, we distinguish five data levels:

level 0 - raw data

level 1 - automated QC - large obvious errors removed

level 2 - manual QC

level 3 - gap-filled or interpolated data

level 4 - aggregated and summarized data

Quality assurance of raw data collection

The quality of the data collected in a mesocosm experiment improves when the preparation for data collection and the mesocosm sampling undergo several quality assurance steps. These steps differ with the type of data collected; more detailed information on quality assurance for specific sampling procedures can be found in the SOPs for manual data collection (zooplankton, water chemistry, phytoplankton, periphyton, protozoa, bacteria, archaea, viruses etc.). See above for examples, including sensor calibration and adequate labelling of sampling containers.

In addition to the method-specific quality assurance steps described in the specific SOPs, one should define standard names for common objects before collecting the data. This should be done for at least the following cases:

- Parameter name: use standardized names that describe the content, and describe the parameter in the metadata [4] [2] [5]. As best practice, use the standardized names in the vocabulary developed during the AQUACOSM project.

- Formats: choose a format for each parameter, describe this format in the metadata and use it throughout the whole dataset. Important formats to consider are dates, times, spatial coordinates and significant digits. [4][5]

- Taxonomic nomenclature: follow an international species list.

- Measurement units: make use of the SI units (and the AQUACOSM vocabulary) and document these units (in the metadata). [4] [5]

- Codes: “standardized list of predefined values”. Determine which codes to use, describe the codes and use them consistently. Every change made to the codes should be documented. [4][5]

- Metadata: data about data, with the goal of helping scientists to understand and use the data [4]. The mesocosm metadatabase developed in AQUACOSM (link) is currently built on the Ecological Metadata Language (EML, see Fegraus et al. 2005), specifically adapted to mesocosm data.
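The naming, format and unit conventions listed above can be captured in a small machine-readable data dictionary that is checked at data entry. The following Python sketch is illustrative only: the parameter names, the units, the chosen timestamp format and the `validate_record` helper are hypothetical and are not taken from the AQUACOSM vocabulary or metadatabase.

```python
from datetime import datetime

# Hypothetical data dictionary: one entry per parameter, documenting
# the standard name, the unit and the expected Python type.
DATA_DICTIONARY = {
    "sampling_time": {"unit": "ISO 8601 UTC", "type": str},
    "mesocosm_id": {"unit": None, "type": str},
    "water_temperature": {"unit": "degC", "type": float},
    "chlorophyll_a": {"unit": "ug/L", "type": float},
}


def validate_record(record):
    """Return a list of problems found in one record (empty if none)."""
    problems = []
    # Only standardized parameter names are accepted.
    for name in record:
        if name not in DATA_DICTIONARY:
            problems.append(f"unknown parameter name: {name}")
    # Every documented parameter must be present with the documented type.
    for name, spec in DATA_DICTIONARY.items():
        if name not in record:
            problems.append(f"missing parameter: {name}")
        elif not isinstance(record[name], spec["type"]):
            problems.append(f"wrong type for {name}")
    # Dates and times must follow one documented format throughout.
    try:
        datetime.strptime(record.get("sampling_time", ""), "%Y-%m-%dT%H:%M:%SZ")
    except (ValueError, TypeError):
        problems.append("sampling_time is not in YYYY-MM-DDTHH:MM:SSZ format")
    return problems
```

A record that uses an ad hoc column name such as `Temp`, or a date written as `01/06/2019`, would be reported by this check before it enters the dataset.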

Another important point of QA is to assign the responsibility for data quality to a person or persons who have some experience with QA & QC procedures. [4]

Quality Control

Raw or primary data should not be removed or changed unless there is solid evidence that they are erroneous. In the first instance, questionable data should be flagged according to an international code system (e.g. ICES or similar). If primary data are altered, the original value must be saved and a justification for the action added to the same record. It is essential that the raw, unmanipulated form of the data is saved so that any subsequent procedures performed on the data can be repeated [6]. Rather than removing or deleting data, it is preferable to assign flags through a range of QA processes and steps; QC can then be carried out by filtering the data on those flags before further analysis.

The general QC checks that should be done are described in detail in [7]. They can be performed manually or automatically, the latter being more applicable to high-frequency data:

- Gap or missing value check: do you have all the expected results?

- Control samples: Are they within expected range and variability?

- Calibration curves: Are coefficients within expected range and variability?

- Spurious/impossible results check: do you have negative results or results with an unreasonable magnitude?

- Outlier check: do the results fall outside an expected distribution of the data?

- Range check: do the calculated values fall within the expected range and variability?

- Climatology check: are the results reasonable compared with historical results (range and patterns)?

- Neighbour check: are the results reasonable compared to results of the same site, same day or different depths?

- Seasonality check: do results reflect seasonal processes or effects or are they extraordinarily different?
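Two of the checks listed above, the gap check and the spurious/impossible results check, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function names, the flag labels (`ok`, `missing`, `suspect`) and the bounds used are hypothetical, and the gap check only looks for missing times between the first and last recorded sample.

```python
from datetime import datetime, timedelta


def find_gaps(timestamps, expected_step):
    """Return the expected sampling times that are absent from `timestamps`."""
    present = set(timestamps)
    missing = []
    current = min(timestamps)
    last = max(timestamps)
    while current <= last:
        if current not in present:
            missing.append(current)
        current += expected_step
    return missing


def flag_impossible(values, lower, upper):
    """Label each value 'ok', 'missing' or 'suspect'; values are never changed."""
    flags = []
    for v in values:
        if v is None:
            flags.append("missing")
        elif lower <= v <= upper:
            flags.append("ok")
        else:
            flags.append("suspect")
    return flags
```

Both functions return information about the data rather than modifying them, so the raw values remain available for later re-evaluation.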

For a sensor network, QC should include [6]:

- Date and time: check if each data point has the right date and time.

- Range: check if data fall within established upper and lower bounds.

- Persistence: check if the same value is recorded repeatedly, which can indicate problems such as a sensor error or system failure.

- Change in slope: check the change in slope to see if the rate of change is realistic for the type of data collected.

- Internal consistency: evaluate differences between related parameters.

- Spatial consistency: check replicate sensors or compare the sensors with identical sensors from another site.
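As an illustration of the persistence and change-in-slope checks in the list above, the Python sketch below marks suspicious readings in a sensor series. The function names and the thresholds (`max_repeats`, `max_rate`) are hypothetical; suitable values depend on the sensor and the variable measured.

```python
def persistence_flags(values, max_repeats):
    """Mark values in a run of more than `max_repeats` identical readings."""
    flags = [False] * len(values)
    run_start = 0
    for i in range(1, len(values) + 1):
        # A run ends at the end of the series or when the value changes.
        if i == len(values) or values[i] != values[run_start]:
            if i - run_start > max_repeats:
                for j in range(run_start, i):
                    flags[j] = True
            run_start = i
    return flags


def slope_flags(times_s, values, max_rate):
    """Mark values whose rate of change from the previous reading exceeds `max_rate`.

    `times_s` holds the measurement times in seconds.
    """
    flags = [False] * len(values)
    for i in range(1, len(values)):
        rate = abs(values[i] - values[i - 1]) / (times_s[i] - times_s[i - 1])
        if rate > max_rate:
            flags[i] = True
    return flags
```

Note that with this simple slope test the reading that follows a spike is also marked, because the return to normal values is itself an abrupt change; such points need manual evaluation.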

After the QC check, one should evaluate the data points that did not pass. Compare them with other variables, and check calibration curves, other types of sampling and instrument performance. Check log books for comments by the responsible person. A data point should be removed or replaced (for example, with an interpolated value) only when there is evidence of sampling error, contamination or instrument failure. The decision and its justification must be documented and the data point flagged accordingly.
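One way to make sure that a replaced value keeps its raw value, its flag and the documented reason together is to store them in a single record. The sketch below assumes a hypothetical `Observation` structure and `replace_value` helper; the flag codes are those of Table 1-1.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    raw_value: float   # never modified after data entry
    value: float       # working value used in analysis
    flag: str = "V0"   # flag code from Table 1-1
    history: list = field(default_factory=list)  # documented decisions


def replace_value(obs, new_value, flag, reason, decided_by):
    """Replace the working value, keeping the raw value and the justification."""
    if not reason:
        raise ValueError("a documented reason is required to alter a value")
    obs.history.append(
        {"old": obs.value, "new": new_value, "flag": flag,
         "reason": reason, "decided_by": decided_by}
    )
    obs.value = new_value
    obs.flag = flag
    return obs
```

For example, `replace_value(obs, 8.1, "V3", "sensor fouling noted in log book", "J. Doe")` changes the working value and the flag while leaving `obs.raw_value` untouched.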

Flagging

To establish successful QC procedures, it is crucial to flag your data to explain the differences between the raw data and the processed data. “Flags or qualifiers convey information about individual data values, typically using codes that are stored in a separate field to correspond with each value” [6]. We recommend using the flag values suggested by Hook et al. [5], as they allow you to retrieve the QC steps your data have undergone.

Comment: other possible flagging systems are those of Campbell et al. [6], the Marine Waters website [8], the QARTOD flagging system [9], the IODE flagging system described by Reiner Schlitzer [10] and protocols produced by EU infrastructure projects such as the Copernicus Marine Environment Monitoring Service (CMEMS) [11]. Table 1-1 suggests a flagging system that may be useful for mesocosm applications. It is more complicated than some systems, but it contains information on why a data point is questionable and allows the data to be filtered with different stringencies of quality control.

Table 1-1: Recommended flag values [5] for the AQUACOSM project

| Flag Value | Description |
|---|---|
| V0 | Valid value |
| V1 | Valid value but comprised wholly or partially of below detection limit data |
| V2 | Valid estimated value |
| V3 | Valid interpolated value |
| V4 | Valid value despite failing to meet some QC or statistical criteria |
| V5 | Invalid value, flagged because of possible contamination (e.g., pollution source, laboratory contamination source) |
| V6 | Invalid value due to non-standard sampling conditions (e.g., instrument malfunction, sample handling) |
| V7 | Valid value but set equal to the detection limit (DL) because the measured value was below the DL |
| M1 | Missing value because not measured |
| M2 | Missing value because invalidated by data originator |
| H1 | Historical data that have not been assessed or validated |
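Because each flag in Table 1-1 records why a value is questionable, the same dataset can be filtered with different stringency. The Python sketch below shows one possible way to do this; the grouping of flags into `STRICT`, `MODERATE` and `LENIENT` tiers is a hypothetical choice for illustration, not part of the flagging scheme itself.

```python
# Flag codes from Table 1-1, grouped into hypothetical stringency tiers.
STRICT = {"V0"}
MODERATE = STRICT | {"V1", "V2", "V3", "V7"}
LENIENT = MODERATE | {"V4"}


def filter_by_flags(records, accepted_flags):
    """Return only the (value, flag) records whose flag is accepted."""
    return [(value, flag) for value, flag in records if flag in accepted_flags]
```

Filtering returns a new list and leaves the flagged records in place, so a later analysis can apply a different tier to the same data.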

Table 1-2: The ARGO data quality flagging system

| Flag Value | Description |
|---|---|
| 0 | No data quality control on data |
| 1 | Data passed all tests |
| 2 | Data probably good |
| 3 | Data probably bad. Failed minor tests |
| 4 | Data bad. Failed major tests |
| 7 | Averaged value |
| 8 | Interpolated value |
| 9 | Missing data |

Table 1-3: ICES Data Quality Flag

| Flag Value | Description |
|---|---|
| 0 | data are not checked |
| 1 | data are checked and appear correct |
| 2 | data are checked and appear inconsistent but correct |
| 3 | data are checked and appear doubtful |
| 4 | data are checked and appear to be wrong |
| 5 | data are checked and the value has been altered |

Automated QC

The large increase in the number of studies using multiple sensors recording at high frequency has led to the generation of large volumes of data, making manual QC extremely difficult and undesirable. Visual checks of such data are relatively straightforward but time-consuming. It is therefore increasingly desirable to automate these approaches using the automated QC methods that have been developed, ideally integrated into database functionality, for example through R or Python code adapted to the particular data set. These QC steps can be applied in real time or after data collection. Real-time quality control (RTQC) has the advantage that an alarm system can be integrated to indicate suspect values, providing an early warning of sub-optimal sensor performance. Automated methods apply many of the same checks as manual methods, in particular:

- Date and time: this checks if each data point has the right date and time.

- Range: this checks if data fall within established upper and lower bounds. It can be divided into global range tests (possible distribution) and local range tests (probable distribution).

- Persistence (or frozen value test): this checks if the same value is recorded repeatedly, which can indicate problems such as a sensor error or system failure.

- Change in slope (or spike test): this checks the change in slope to see if the rate of change is realistic for the type of data collected.

- Internal consistency: this evaluates differences between related parameters.

- Spatial consistency: this checks replicate sensors or compares the sensors with identical sensors from another site.
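The distinction between global and local range tests in the list above can be sketched as a two-tier check. In this Python illustration the function name, the result labels and the example bounds are assumptions; real bounds should come from sensor specifications and from knowledge of the site and season.

```python
def range_test(value, global_range, local_range):
    """Classify a value as 'pass', 'suspect' or 'fail' using two nested ranges."""
    g_low, g_high = global_range
    l_low, l_high = local_range
    if value < g_low or value > g_high:
        return "fail"      # outside the possible distribution
    if value < l_low or value > l_high:
        return "suspect"   # possible, but outside the probable distribution
    return "pass"
```

With a hypothetical global range of (-2.0, 40.0) and a local range of (10.0, 25.0) for water temperature in degrees Celsius, a reading of 32.0 would be marked as suspect and a reading of 55.0 as failed. In a real-time setting, either result could be used to trigger an alarm.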

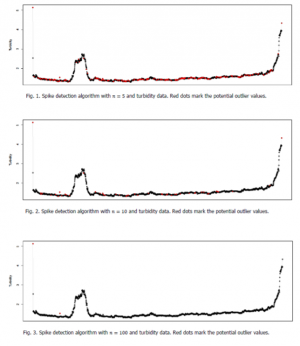

Parameters measured by autonomous sensors can vary on a range of scales, and a number of sensor types, in particular optical sensors, can be affected by non-negligible noise. A number of methods can be used to identify outliers or spurious data, which can then be flagged accordingly and filtered out of subsequent analysis. One approach [11] applies a procedure that tests the statistical entropy caused by each progressive measurement, as described by [12]. This is a two-step estimation of the Akaike information criterion; details can be found in [12]. This approach to outlier or spike identification is highly dependent on the number of sample points considered in the estimate of statistical entropy. Including too few samples risks making the test too sensitive, so that ‘good’ data are excluded. Including too many samples may make the test too insensitive (Figure 1-2). In addition, the designation of an observation as an outlier depends on the cut-off or critical value of variation above which a value is designated as an outlier. The selection of these two parameters depends on the type of data being collected. For example, the data generated by the Ferrybox [11] are collected 400 m apart, and in this case it was necessary to relax the criteria used for identifying outliers because the natural variation between samples 400 m apart is greater than that between samples measured at the same location.

A similar approach to the identification of outliers in sensor data has been developed at the University of Waikato, New Zealand (https://www.lernz.co.nz/tools-and-resources/b3) and is now also available as an R package (https://github.com/kohjim/rB3), under the aegis of the GLEON network. B3 is a freely available, downloadable programme that can be used to process data after collection. It is an integrated programme that can carry out many of the QC steps outlined above, e.g. range check, missing data, repeated values. It also identifies outliers or spikes by two methods. The first is similar to the method described above: a running mean is calculated, and observations that fall outside a critical standard deviation are identified as potential outliers. Both the period over which the running mean is calculated and the cut-off value of the standard deviation can be altered to suit the dataset in question. The second method uses a rate of change (ROC) analysis to identify jumps in the data that are out of the normal range. The number of data points used to identify the ‘normal’ range can be altered, as can the critical rate of change above which a value is deemed a potential outlier. Each of these methods has different sensitivities to outliers, depending on the kind of data analysed, and there is a balance to strike between excluding ‘good’ data and including ‘bad’ data. For example, data with a large diurnal range (e.g. DO data) may be vulnerable to the exclusion of good data at the high and low ends of the diurnal cycle when the running mean method is used. Thus, it may be necessary to tailor the cut-off values for ROC analysis and running mean analysis to each dataset, or even each mesocosm, and some form of manual QC is highly recommended.
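The running-mean idea described above can be sketched in Python as follows. This is a generic illustration of the technique, not the B3 or rB3 implementation: the function name, the use of a trailing window and the parameters `window` and `n_sd` are assumptions made for this example.

```python
from statistics import mean, stdev


def running_mean_outliers(values, window, n_sd):
    """Flag values further than `n_sd` standard deviations from the mean
    of the preceding `window` values (window must be at least 2)."""
    flags = [False] * len(values)
    for i in range(window, len(values)):
        previous = values[i - window:i]
        centre = mean(previous)
        spread = stdev(previous)
        # A zero spread (frozen values) is left to the persistence test.
        if spread > 0 and abs(values[i] - centre) > n_sd * spread:
            flags[i] = True
    return flags
```

Both `window` and `n_sd` need tuning per dataset: a short window or a small `n_sd` flags more points, including good data at the extremes of a diurnal cycle, while large values let real spikes pass. In this simple version a flagged spike still enters the windows that follow it, which is one reason manual review of the flagged points remains advisable.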

Aggregated, summarized data

As a final step, data can be aggregated and summarized for publication purposes. This includes integration over, for example, depth (space) and time. It should be remembered, however, that integration reduces the degrees of freedom and may limit the statistical tests that can be performed and possibly the statistical power.

Aggregation is preferably done in a database environment with the integrating function defined in a single place. This function should be validated by manual calculation with selected data from the same set.

The start and end values for the range of integration should be clearly defined, as should the methods for interpolation and extrapolation where applicable.

The number of samples included in the integrated value (n) and its standard deviation (±SD) can be provided to assess the extent of the data behind the aggregated value.
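A minimal Python sketch of such aggregation is given below, assuming hypothetical helper names. `summarise` reports the mean together with n and the sample standard deviation, and `depth_integrate` integrates a profile over an explicitly defined depth range using the trapezoidal rule, which is one common choice among several possible integration methods.

```python
from statistics import mean, stdev


def summarise(values):
    """Return mean, standard deviation and n for the non-missing values."""
    present = [v for v in values if v is not None]
    n = len(present)
    return {
        "mean": mean(present) if n else None,
        "sd": stdev(present) if n > 1 else None,
        "n": n,
    }


def depth_integrate(depths_m, values):
    """Integrate a profile over depth with the trapezoidal rule.

    The integration range runs from the first to the last depth given.
    """
    total = 0.0
    for i in range(1, len(depths_m)):
        thickness = depths_m[i] - depths_m[i - 1]
        total += thickness * (values[i] + values[i - 1]) / 2.0
    return total
```

Keeping these functions in one place makes it straightforward to validate them against a manual calculation on a few selected data points, as recommended above.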

References

- [1] Jones, M.B., et al., The New Bioinformatics: Integrating Ecological Data from the Gene to the Biosphere. Annual Review of Ecology, Evolution, and Systematics, 2006. 37(1): p. 519-544.

- [2] Rüegg, J., et al., Completing the data life cycle: using information management in macrosystems ecology research. Frontiers in Ecology and the Environment, 2014. 12(1): p. 24-30.

- [3] Michener, W.K., Ecological data sharing. Ecological Informatics, 2015. 29: p. 33-44.

- [4] Michener, W.K. and M.B. Jones, Ecoinformatics: supporting ecology as a data-intensive science. Trends in Ecology & Evolution, 2012. 27(2): p. 85-93.

- [5] Hook, L.A., et al., Best practices for preparing environmental data sets to share and archive. 2010, Oak Ridge National Laboratory Distributed Active Archive Center, Oak Ridge, Tennessee, U.S.A. p. 40.

- [6] Campbell, J.L., et al., Quantity is Nothing without Quality: Automated QA/QC for Streaming Environmental Sensor Data. BioScience, 2013. 63(7): p. 574-585.

- [7] Bos, J., C. Krembs, and W.R. Kammin, EAP088 Marine Waters Data Quality Assurance and Quality Control V1.0 5/30/2015. 2015: p. 35.

- [8] Department of Ecology - State of Washington. Marine Waters Data Quality Codes. [webpage] 2017 [cited 2017 October 17]; Available from: http://www.ecy.wa.gov/programs/eap/mar_wat/datacodes.html.

- [9] Integrated Ocean Observing System. Manual for Real-Time Oceanographic Data Quality Control Flags. 2017 [cited 2017 October]; Available from: https://ioos.noaa.gov/wpcontent/uploads/2017/06/QARTOD-Data-FlagsManual_Final_version1.1.pdf.

- [10] Schlitzer, R. Oceanographic quality flag schemes and mappings between them. Version 1.4, 2013-05-24 [cited 2017 October]; Available from: https://odv.awi.de/fileadmin/user_upload/odv/misc/ODV4_QualityFlagSets.pdf.

- [11] Jaccard Pierre, Hjemann Dag Oystein, Ruohola Jani, Ledang Anna Birgitta, Marty Sabine, Kristiansen Trond, Kaitala Seppo, Mangin Antoine (2018). Quality Control of Biogeochemical Measurements. CMEMS-INS-BGC-QC. https://doi.org/10.13155/36232

- [12] Ueda, T. 2009. A simple method for the detection of outliers. Electronic Journal of Applied Statistical Analysis 2:67-76.